Based on the e-book by Antonio Gulli, a senior Google engineer.

Pre-Introduction : This blog post stands on the shoulders of giants, specifically Antonio Gulli, whose masterclass on Agentic Artificial Intelligence transformed my understanding of how structured prompt chaining can help clear the ‘hallucination hurdle’.

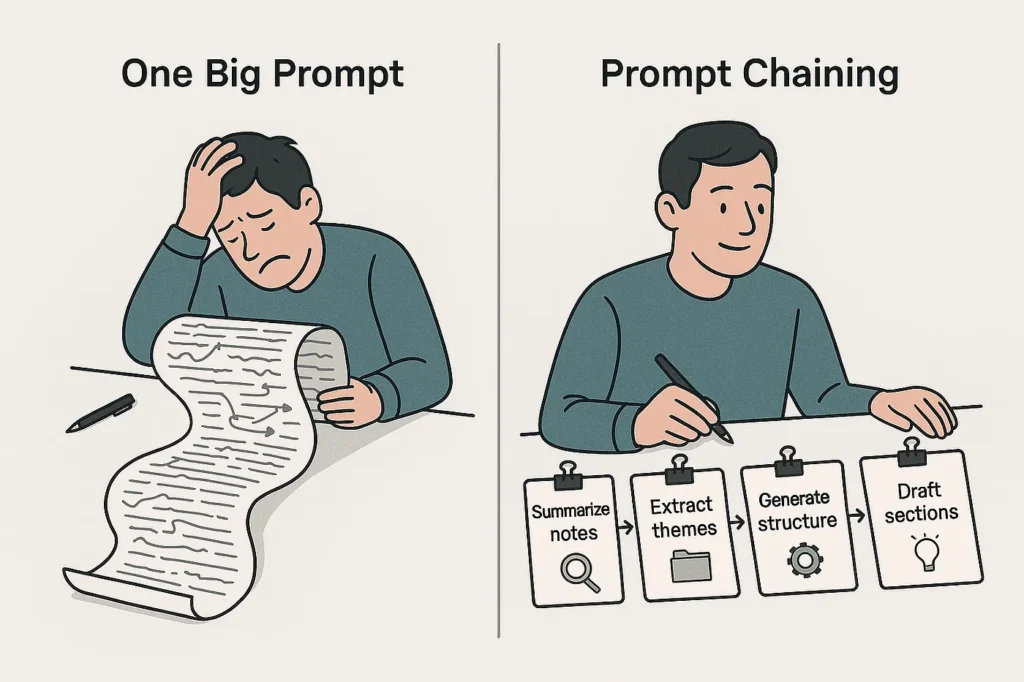

Introduction : Stop thinking of Artificial Intelligence as a “Magic Box” and start seeing it as a high-stakes assembly line. In the early days of Generative AI, we were obsessed with the “mega prompt”: a wall of text where we begged the model to be a researcher, a writer, and an editor all at once. The result? Often a “jack of all trades, master of none” output that lacked depth and precision.

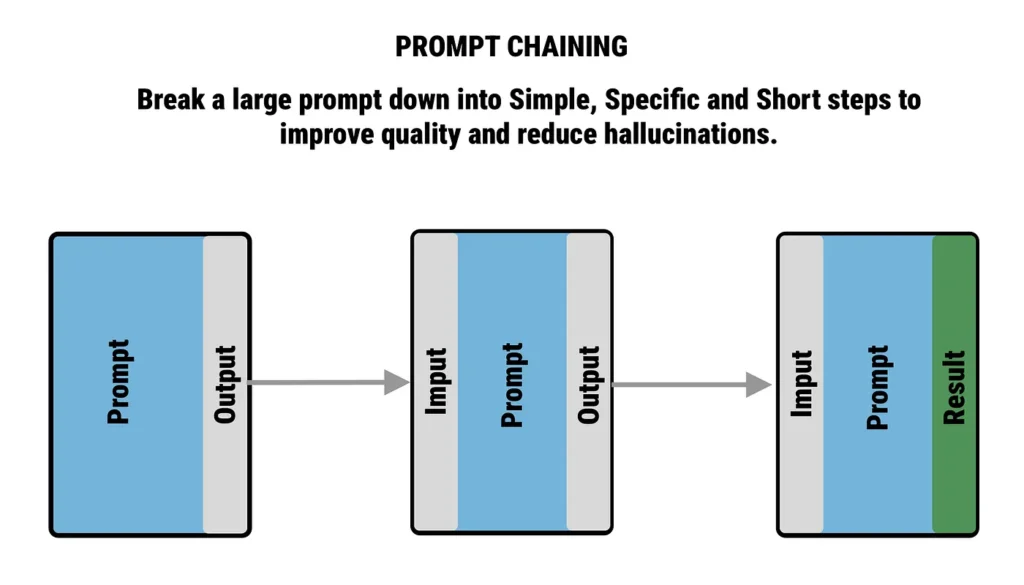

Prompt Chaining is the sophisticated antidote to that chaos. It is the art of decomposing a complex goal into a sequence of surgical, interconnected strikes.

- The “divide and conquer” philosophy :

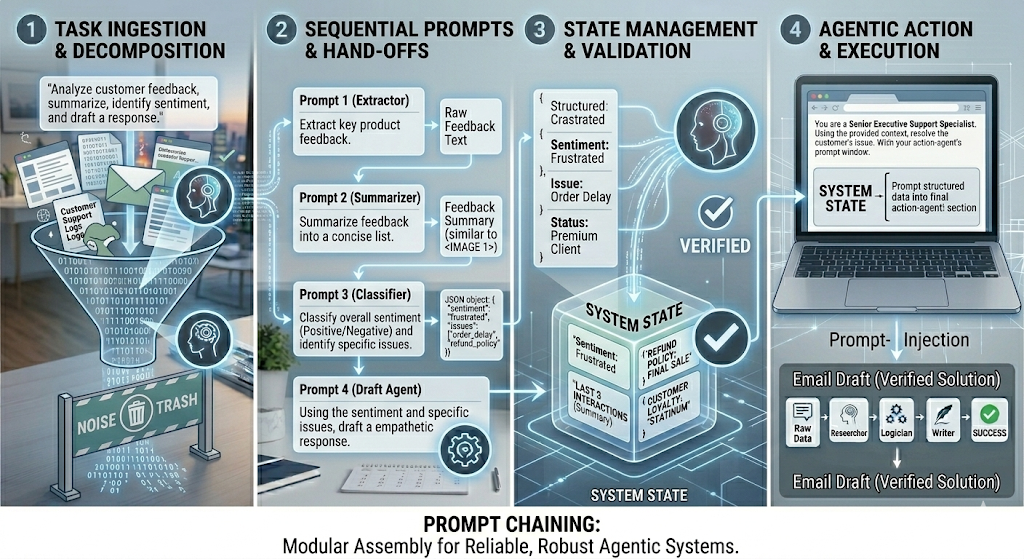

- In the world of Agentic AI, prompt chaining is the foundational pattern that transforms a raw model into a sophisticated agent.

- Instead of overwhelming an LLM with one large task, we break that task into a sequence of smaller, manageable sub-problems.

- Also known as the pipeline pattern, prompt chaining is a powerful paradigm for handling complex tasks with large language models (LLMs).

- By breaking a “daunting” problem into sub-problems, we can reduce the cognitive load on the model, allowing it to focus its full attention on a narrow, specific objective.

- The chain refers to the passing of state.

- The output of step A isn’t just a result; it is the fuel for step B.

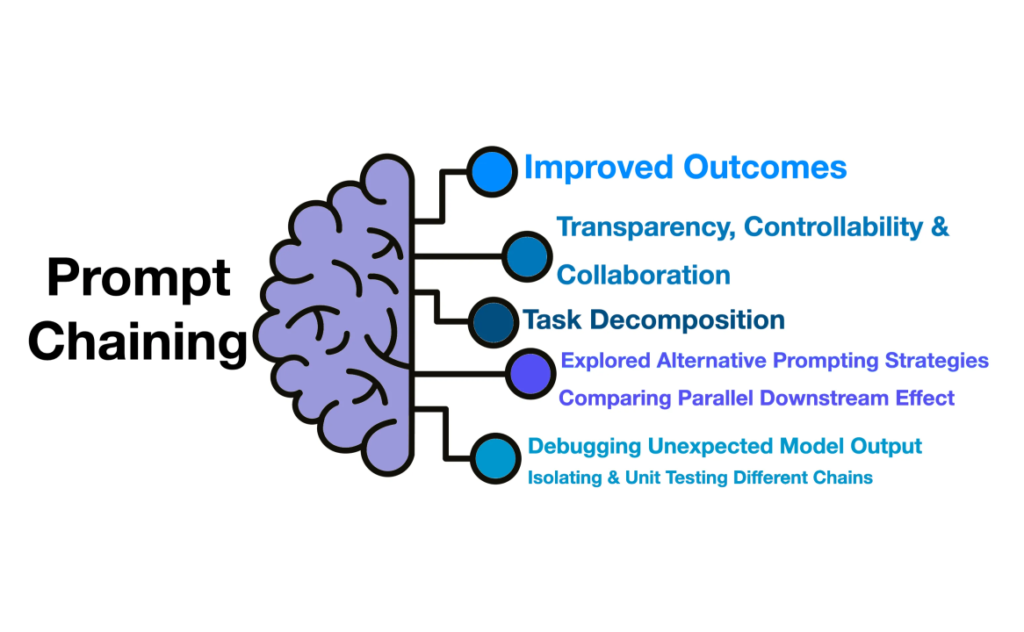

- This doesn’t just improve accuracy; it introduces :

- Reasoning over response : Each step allows the agent to “think” before it acts.

- Structured control : You gain the ability to validate, filter and refine the AI’s logic at every link in the chain.

- Reliability : By isolating specific tasks, we reduce the “hallucination fog” that often plagues complex, single-prompt requests.

- As the model generates a long response, it can lose its “logical north star”. The further it gets from the initial instruction, the more likely it is to deviate from the original intent.

- In a single-shot response, a small mistake in the first paragraph acts as a faulty foundation. Every subsequent sentence then amplifies that initial error.

- Complex queries often require the model to hold vast amounts of information in its active “memory” (the context window). If the window is stretched too thin, the model lacks the “room” to produce a high-quality response.

- When the cognitive load is too high, the LLM’s probability of “hallucinating” facts increases as it struggles to balance logic, data extraction and creative formatting simultaneously.

This is the equivalent of moving from an unorganized “to-do” list to a professional assembly line. By breaking a task into a linear sequence, we can ensure that the AI never moves to step 2 until step 1 is verified and solid.
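The “verify before moving on” idea can be sketched in a few lines. This is a minimal illustration and not the e-book's implementation: the `run_chain` helper, the step names and the toy transforms are all hypothetical, and in a real chain each `transform` would wrap a model call.

```python
from typing import Callable, List, Tuple

# A step is a (name, transform, validator) triple. All three are hypothetical
# stand-ins: in a real chain, `transform` would wrap a model call.
Step = Tuple[str, Callable[[str], str], Callable[[str], bool]]


def run_chain(steps: List[Step], initial_input: str) -> str:
    """Run steps in order, passing each output to the next step as input.

    Raises ValueError as soon as a step's output fails its validator, so a
    later step never runs on unverified state.
    """
    state = initial_input
    for name, transform, is_valid in steps:
        state = transform(state)
        if not is_valid(state):
            raise ValueError(f"step '{name}' produced invalid output: {state!r}")
    return state


# Toy transforms so the sketch runs without a model.
steps: List[Step] = [
    ("extract", lambda text: text.strip().lower(), lambda out: len(out) > 0),
    ("tag", lambda text: f"topic={text}", lambda out: out.startswith("topic=")),
]

result = run_chain(steps, "  Tokyo Food Tour  ")
print(result)  # topic=tokyo food tour
```

The validator is the important part: it is the checkpoint that stops a bad intermediate result from becoming the foundation of the next step.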

Below is a practical, real-world example of how this enhances reliability.

The Travel Architect chain : Instead of one giant request, we break the task into three logical links.

- The Researcher (Data Extraction) :

- Input : Destination (Tokyo) + Interests (Food/History).

- Task : Identify 6 top-rated historical sites and 6 highly-reviewed restaurants near those sites.

- Reliability win : The AI focuses solely on finding facts. It doesn’t worry about the “Itinerary / Email” yet, which prevents it from ignoring specific dietary constraints.

- By isolating the extraction of Tokyo + Food/History into its own step, the system ensures that subsequent agents (like the planner / writer) are working with a grounded, verified dataset.

- The Logician (Structural Planning) :

- Input : List of 12 locations from Link 1

- Task : Group locations by neighborhood to minimize travel time and assign them to Day 1, 2 or 3.

- Reliability win : By separating this step, the AI can double-check the geography. It ensures you aren’t eating lunch in the north and visiting a temple in the south 10 minutes later.

- The Logician acts as a critical bridge between raw information and the final output.

- By isolating structural planning, the system ensures the final itinerary is physically and logically possible, preventing common AI failures.

- The Writer (Formatting and Tone) :

- Input : The optimized 3-day schedule from Link 2.

- Task : Rewrite the schedule into a “friendly email” format for the user.

- Reliability win : Since the hard thinking (data and logic) is already done and verified, the AI can dedicate 100% of its creativity to the tone and style.

- The writer or synthesizer acts as the final quality control layer, focusing exclusively on communication excellence.

- The writer’s primary mission is to transform validated, structured data into a user-facing narrative that adheres to specific brand guidelines or user preferences.
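The three links above can be sketched as three small functions with a check between the first two. This is a hedged illustration only: `research`, `verify_locations`, `plan` and `write_email` are hypothetical names, the Researcher is a stub that returns placeholder data (not real Tokyo venues), and a real chain would replace the stubs with model calls.

```python
from collections import defaultdict
from typing import Dict, List

Location = Dict[str, str]


def research(destination: str, interests: List[str]) -> List[Location]:
    """Link 1 stand-in. Placeholder names and areas, not real Tokyo data."""
    return [
        {"name": "Site A", "area": "north"},
        {"name": "Restaurant A", "area": "north"},
        {"name": "Site B", "area": "south"},
        {"name": "Restaurant B", "area": "south"},
    ]


def verify_locations(locations: List[Location]) -> List[Location]:
    """Gate between Link 1 and Link 2: every entry needs a name and an area."""
    for loc in locations:
        if not loc.get("name") or not loc.get("area"):
            raise ValueError(f"incomplete location: {loc}")
    return locations


def plan(locations: List[Location]) -> Dict[str, List[str]]:
    """Link 2: group stops by area and give each area its own day."""
    by_area: Dict[str, List[str]] = defaultdict(list)
    for loc in locations:
        by_area[loc["area"]].append(loc["name"])
    return {
        f"Day {day}": names
        for day, (_area, names) in enumerate(sorted(by_area.items()), start=1)
    }


def write_email(schedule: Dict[str, List[str]]) -> str:
    """Link 3: formatting only, no new facts are introduced here."""
    lines = ["Hi! Here is your itinerary:"]
    lines += [f"{day}: {', '.join(stops)}" for day, stops in schedule.items()]
    return "\n".join(lines)


locations = verify_locations(research("Tokyo", ["food", "history"]))
schedule = plan(locations)
email = write_email(schedule)
print(email)
```

Because each day only contains stops from one area, the “lunch in the north, temple in the south” failure cannot occur by construction.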

In the architecture of Agentic AI, if prompt chaining is the “conveyor belt”, then structured output is the “standardized container”.

Structured Data (usually JSON) : This is a foundational element in Agentic AI. It lets AI agents convert chaotic, unstructured information into machine-readable formats, which in turn allows them to reason, plan and take autonomous actions within workflows.
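One way to treat structured output as a “standardized container” is to refuse anything that does not fit the container. The sketch below is an assumption-laden example: `parse_structured` and the `schema` keys (`destination`, `days`) are hypothetical and only reuse the Tokyo trip from earlier for illustration.

```python
import json
from typing import Any, Dict, Mapping


def parse_structured(raw: str, required: Mapping[str, type]) -> Dict[str, Any]:
    """Parse model text as a JSON object and check required keys and types."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    for key, expected_type in required.items():
        if key not in data:
            raise ValueError(f"missing key: {key}")
        if not isinstance(data[key], expected_type):
            raise ValueError(f"key {key!r} should be {expected_type.__name__}")
    return data


# Hypothetical schema for illustration.
schema = {"destination": str, "days": int}
trip = parse_structured('{"destination": "Tokyo", "days": 3}', schema)
print(trip["destination"], trip["days"])
```

If the model wraps its JSON in chatty prose, `parse_structured` raises instead of passing a malformed container down the chain.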

Practical Example : The “E-commerce support Agent”. Imagine an agent tasked with processing a customer return. A monolithic prompt might get confused, but a structured chain makes it far more robust.

- The classifier (structured output) : The agent reads the customer’s email and is forced to output a JSON object.

- Prompt : “Classify this email. Output ONLY JSON”.

- The output :

- The classifier’s job is to take unstructured human input and immediately translate it into a clean, machine-readable JSON format.

- This first step turns a vague request into a precise digital command that the rest of the AI team can follow without confusion.

- This also acts as a bridge between human language and computer code.

- Step 2 is the logic step. Here, the AI actually thinks and plans. It takes the structured data from step 1 and applies a set of reasoning rules to decide what to do next.

- It decides which external tools to call, like searching a database, checking a calendar or calculating a price.

- It ignores irrelevant noise and focuses only on the specific logic needed to solve the user’s problem.

3. The response Architect (The Final Link) : If the urgency is low, step 2 passes that JSON to the final agent to draft a reply. Because the agent knows the order_id and intent are verified, it doesn’t have to “hallucinate” or search the text again. It just builds the response using the keys provided.

- This is the “customer service” layer. It takes the cold, hard data from the logic step and translates it back into human language.

- It converts technical JSON results into a friendly, helpful sentence.

- It double-checks that the original question was actually answered before sending the message to the user.
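The return-processing chain above can be sketched end to end. Treat this as an illustration under stated assumptions: the key names `intent`, `order_id` and `urgency` come from the description of the final link, but the values, the `classify` stub (which stands in for a model call) and the `escalate_to_human` fallback are hypothetical.

```python
import json
from typing import Dict


def classify(email_text: str) -> str:
    """Step 1 stand-in: a real version would send the prompt to a model.

    The returned JSON values are hard-coded and hypothetical.
    """
    return json.dumps({"intent": "return", "order_id": "A-1001", "urgency": "low"})


def route(ticket: Dict[str, str]) -> str:
    """Step 2: pick the next action from structured fields only."""
    if ticket["urgency"] == "low":
        return "draft_reply"
    return "escalate_to_human"  # assumed fallback; the post only covers low urgency


def draft_reply(ticket: Dict[str, str]) -> str:
    """Step 3: build the reply from the verified keys, never the raw email."""
    return (
        f"Hi! We have received your {ticket['intent']} request "
        f"for order {ticket['order_id']}."
    )


def handle(email_text: str) -> str:
    ticket = json.loads(classify(email_text))
    if route(ticket) == "draft_reply":
        return draft_reply(ticket)
    return f"Escalated order {ticket['order_id']} to a human agent."


reply = handle("I want to send back my order.")
print(reply)
```

Note that `draft_reply` never sees the original email. It can only use the keys handed to it, which is what keeps the final link from re-reading, or re-imagining, the customer's text.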

- Information processing workflows : These are the strategic blueprints that define how data travels through an AI system. They transform an AI from a reactive responder into a deliberate information refinery.

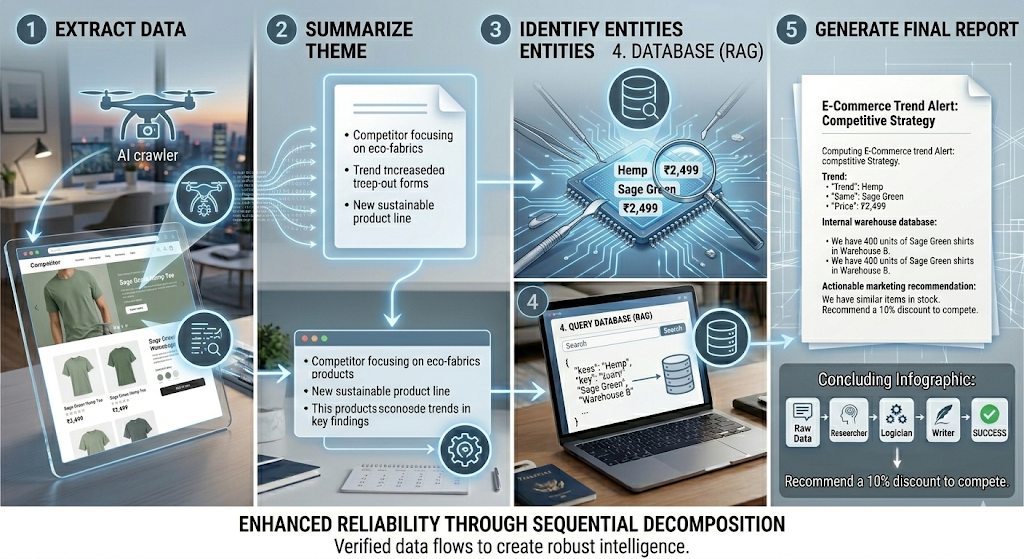

Let’s look at a scenario that shows how a chaotic pile of information is refined into a high-value asset through a structured chain.

- Step 1 (Extract) : The agent pulls text from a competitor’s new “spring collection” landing page.

- Step 2 (Summarize) : It identifies the core theme : “A shift toward sustainable hemp-based fabrics and earthy color palettes.”

- Step 3 (Entities) : It extracts specific data : “Hemp”, “Sage green”, and price points like 2499 rupees.

- Step 4 (Search) : It searches the company’s internal inventory to see if they have any “sage green” or “Hemp” products currently in stock.

- Step 5 (Report) : It sends a Slack message: “Competitor X launched a Hemp line at 2499 rupees (Summary/Entities). We have 400 units of similar sage green shirts in warehouse B (Search Result). Recommend a 10% discount to compete.”
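Steps 3 to 5 of this workflow can be sketched without a model. Everything here is a simplified assumption: `PAGE_TEXT` stands in for the extracted page, `INVENTORY` for the internal stock database, and `WATCH_TERMS` for whatever entity extraction a real agent would perform. The summarization step is left out because it needs an actual model call.

```python
import re
from typing import Dict, List

# Step 1 stand-in: text a scraper might return (wording is illustrative).
PAGE_TEXT = "Spring collection: sustainable hemp shirts in sage green at 2499 rupees."

# Hypothetical inventory; a real Step 4 would query an internal database.
INVENTORY = {"sage green": {"units": 400, "warehouse": "B"}}

WATCH_TERMS = ["hemp", "sage green"]  # assumed list of terms worth tracking


def extract_entities(text: str) -> Dict[str, list]:
    """Step 3: pull watched terms and rupee prices out of the page text."""
    lowered = text.lower()
    terms = [term for term in WATCH_TERMS if term in lowered]
    prices = [int(p) for p in re.findall(r"(\d+)\s*rupees", lowered)]
    return {"terms": terms, "prices": prices}


def search_inventory(terms: List[str]) -> Dict[str, dict]:
    """Step 4: keep only the terms we actually stock."""
    return {term: INVENTORY[term] for term in terms if term in INVENTORY}


def build_report(entities: Dict[str, list], matches: Dict[str, dict]) -> str:
    """Step 5: compose the message from earlier outputs only."""
    parts = [f"Competitor terms: {', '.join(entities['terms'])}."]
    if entities["prices"]:
        parts.append(f"Lowest price seen: {min(entities['prices'])} rupees.")
    for term, stock in matches.items():
        parts.append(
            f"We hold {stock['units']} units matching '{term}' "
            f"in warehouse {stock['warehouse']}."
        )
    return " ".join(parts)


entities = extract_entities(PAGE_TEXT)
matches = search_inventory(entities["terms"])
report = build_report(entities, matches)
print(report)
```

Each function consumes only the output of the one before it, so every number in the final report can be traced back to a specific step.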

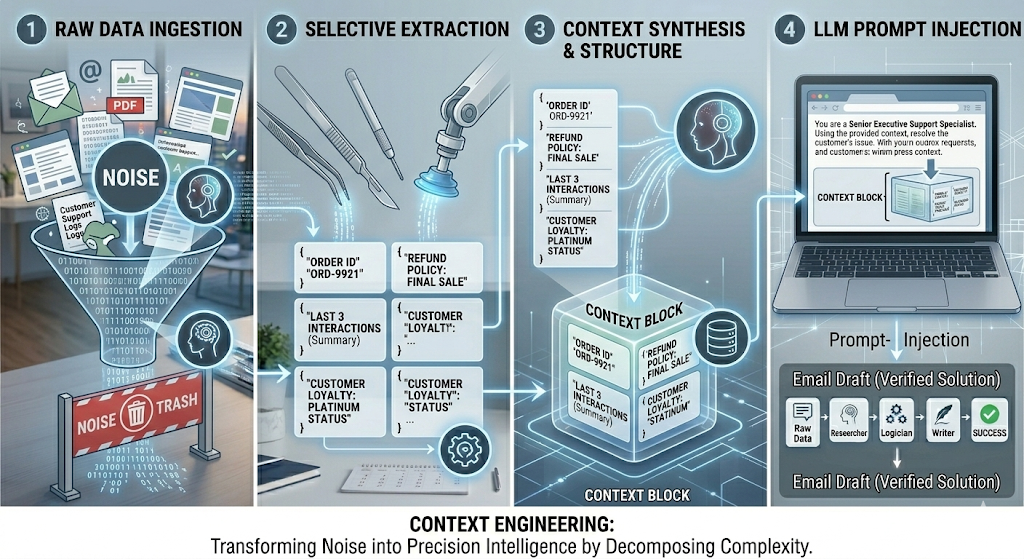

Context Engineering (The Briefing folder) : This is the pile of documents you hand to the consultant before they start. Even the smartest consultant can’t give you a risk assessment if they don’t know your company’s bank balance, your suppliers’ names, or your past failures. In AI, this means providing the exact, relevant data the model needs to process.

Prompt Engineering (The Instruction) : This is how you speak to the consultant. If you say, “Help me with my business”, they’ll give you a blank stare. If you say, “I need a 5-page financial risk assessment focusing on our Q3 supply chain, written in a professional tone”, you’ve engineered a great prompt. It’s about clarity, persona and constraints.

Prompt chaining is the bridge that connects the two – it uses one prompt to “clean” the context for the next prompt.

Part A : Context Engineering (The Sifter). Instead of dumping the entire customer history (100+ emails) into the AI, we “engineer” the context.

- The Action : A script searches your database and pulls only:

- The last 3 interactions.

- The customer’s current “Loyalty status”.

- The specific “Refund policy” for their product.

- The Result : A “Context block” that is lean and relevant.
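The Sifter can be sketched as a function that trims a full customer record down to the three items listed above. The `CustomerRecord` shape and the `build_context_block` helper are hypothetical; a real script would read these fields from your own database.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CustomerRecord:
    interactions: List[str]  # full history, oldest first
    loyalty_status: str
    refund_policy: str


def build_context_block(record: CustomerRecord, max_interactions: int = 3) -> str:
    """Keep only the most recent interactions plus status and policy."""
    recent = record.interactions[-max_interactions:] if max_interactions > 0 else []
    lines = ["Recent interactions:"]
    lines += [f"- {item}" for item in recent]
    lines.append(f"Loyalty status: {record.loyalty_status}")
    lines.append(f"Refund policy: {record.refund_policy}")
    return "\n".join(lines)


# Illustrative record with 100 emails; the values are made up.
record = CustomerRecord(
    interactions=[f"email {i}" for i in range(1, 101)],
    loyalty_status="Platinum",
    refund_policy="Final sale",
)
context_block = build_context_block(record)
print(context_block)
```

One hundred emails go in and six lines come out, which is the whole point of a lean context block.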

Part B : Prompt Engineering (The Director) – Applying highly specific instruction to that context.

The prompt : “You are a senior Executive support specialist. Using the provided Context block, resolve the customer’s issue.”

- Constraint 1 : Do not offer a refund if the policy says ‘Final sale’.

- Constraint 2 : If the customer is ‘platinum status’, offer a 500-rupee credit regardless of the outcome.

- Format : Output an ‘Internal Reasoning’ section followed by the ‘Email Draft’.
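The Director's prompt can be assembled in code, and the requested two-section format can be checked once the model replies. This is a sketch under assumptions: `build_prompt` and `split_sections` are hypothetical helpers, the response layout (each section introduced by a line such as `Internal Reasoning:`) is an assumed convention, and `sample_response` is hand-written rather than real model output.

```python
from typing import Dict, List, Optional

ROLE = "You are a senior Executive support specialist."
CONSTRAINTS = [
    "Do not offer a refund if the policy says 'Final sale'.",
    "If the customer is 'platinum status', offer a 500-rupee credit "
    "regardless of the outcome.",
]
SECTIONS = ["Internal Reasoning", "Email Draft"]


def build_prompt(context_block: str) -> str:
    """Combine role, constraints, format and context into one prompt."""
    numbered = "\n".join(
        f"Constraint {i}: {text}" for i, text in enumerate(CONSTRAINTS, start=1)
    )
    return (
        f"{ROLE}\n"
        "Using the provided Context block, resolve the customer's issue.\n\n"
        f"{numbered}\n"
        f"Format: Output an '{SECTIONS[0]}' section followed by the '{SECTIONS[1]}'.\n\n"
        f"Context block:\n{context_block}"
    )


def split_sections(response: str) -> Dict[str, str]:
    """Split a response on 'Section Name:' header lines (assumed layout)."""
    found: Dict[str, List[str]] = {}
    current: Optional[str] = None
    for line in response.splitlines():
        header = line.strip().rstrip(":")
        if header in SECTIONS:
            current = header
            found[current] = []
        elif current is not None:
            found[current].append(line)
    missing = [name for name in SECTIONS if name not in found]
    if missing:
        raise ValueError(f"response is missing sections: {missing}")
    return {name: "\n".join(body).strip() for name, body in found.items()}


prompt = build_prompt("Refund policy: Final sale")

# Hand-written stand-in for a model reply.
sample_response = (
    "Internal Reasoning:\n"
    "Policy is Final sale, so no refund.\n"
    "Email Draft:\n"
    "Hello, thank you for reaching out."
)
parts = split_sections(sample_response)
print(parts["Email Draft"])
```

Separating the reasoning from the draft means only the `Email Draft` section ever reaches the customer, while the `Internal Reasoning` section stays available for review.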

Prompt Engineering tells the AI how to think; Context Engineering tells the AI what to think about. The best agents don’t just have a high IQ; they have a high-quality ‘information diet’ and a clear ‘chain of command’.

As we close this chapter on Prompt Chaining, it’s clear that we are witnessing the end of the “Black Box” era. For years, we treated AI like a digital oracle – toss in a question, pray for an answer. The future of Agentic AI isn’t found in a single, smarter prompt; it’s found in the architecture of the sequence.

Prompt Chaining is the DNA of Reliability. It transforms the erratic “Spark” of an LLM into a steady, industrial-grade light. By breaking down the daunting into the doable, we don’t just solve problems – we build logic.

In the world of Agentic AI :

- One prompt is a conversation.

- A Chain of prompts is a system.

- A system of agents is a solution.

We are no longer just using AI; we are choreographing intelligence.